There is a useful test to determine if a data type is truly accessible to intelligence. Ask whether an AI system can not only read it but also build with it.

Language passes this test. A language model not only analyses text but also creates, transforms, translates, summarises, and reasons with written language as its primary material. The same applies to code. The same applies to structured data, mathematics, and audio in its transcribed form.

Video does not pass this test.

This is not a matter of model capability. The models are not the problem. The problem is 40 years older than the models, and it lives in the file format itself.

The Architecture of a Rendered Video File

To understand why video is locked, you first need to understand what a rendered video file actually is.

A rendered video file is the product of a compression and encoding process that fuses two things that are conceptually distinct: the structural description of a sequence (which frames appear, in what order, for how long, and with what transitions) and the media content itself (the encoded pixel data for each frame).

Once these two things are fused, the file becomes, in a technical sense, atomic. You cannot reach inside it without extracting the media content. You cannot modify the sequence without re-rendering the surrounding frames. You cannot access a specific segment without either duplicating the whole file or creating a derivative.
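The point about re-rendering follows from how most delivery codecs compress video. Frames are grouped behind periodic keyframes, and the frames in between are stored as differences from earlier frames. As a result, a frame-accurate cut that does not begin on a keyframe cannot simply copy the compressed data. The sketch below models this under simplifying assumptions: a stream made only of keyframes and forward-predicted frames (no B-frames), with an illustrative keyframe every 48 frames. The function names are hypothetical.

```python
from bisect import bisect_right

def decode_start(keyframes: list[int], frame: int) -> int:
    """Return the latest keyframe index at or before `frame`.

    In this simplified model each predicted frame depends on the one before it,
    so decoding any frame has to begin at the preceding keyframe.
    """
    i = bisect_right(keyframes, frame) - 1
    if i < 0:
        raise ValueError(f"no keyframe at or before frame {frame}")
    return keyframes[i]

def plan_cut(keyframes: list[int], start: int, end: int) -> dict:
    """Summarise what extracting frames [start, end) would involve."""
    if not 0 <= start < end:
        raise ValueError("expected 0 <= start < end")
    key = decode_start(keyframes, start)
    return {
        "decode_from": key,
        # Frames decoded only to reconstruct `start`, then thrown away.
        "discarded_frames": start - key,
        # Without B-frames the end of a cut is free; a start that is not a
        # keyframe forces re-encoding of the cut's opening frames.
        "needs_reencode": start != key,
        "output_frames": end - start,
    }

keyframes = list(range(0, 240, 48))  # hypothetical: keyframe every 48 frames
print(plan_cut(keyframes, 100, 200))
# {'decode_from': 96, 'discarded_frames': 4, 'needs_reencode': True, 'output_frames': 100}
```

Real streams (B-frames, open GOPs, variable keyframe intervals) complicate the picture, but this dependency on earlier frames is why an edit to a rendered file reaches beyond the frames being edited.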

This architectural decision made complete sense in the context in which it was made. Video was designed to be played back and distributed. You needed a file you could put on a tape, then a disc, then a server, then a CDN, and have it play from beginning to end. Fusion of structure and content served that purpose perfectly.

It is catastrophically unsuited to a world in which intelligent systems need to build with video.

How Data Types Have Been Made Accessible to AI

To understand the significance of what Video Virtualisation represents, it helps to trace the history of other data types that have been made accessible to intelligence.

Numerical data was the first to be liberated.

Early computing was built around the manipulation of numbers. The structure was always there: numbers have inherent addressability. The challenge was storage and processing. As those constraints relaxed, numerical data became the native currency of computation.

Text was the transformative unlock of the information age.

The moment text was stored as addressable characters rather than images of characters, everything changed. Text became searchable. Text became composable. Text became the raw material of the internet.

The transformation took decades, from early word processors to search engines to large language models, but the architectural foundation was always the addressability of the character.

Structured data became accessible to intelligence through the invention of the relational database in the 1970s. Edgar Codd’s foundational insight, that data should be described in terms of relations rather than physical storage locations, created the conditions for every database-backed application built since. When data became relational, intelligence could query it, join it, transform it, and reason with it.

Code has always been data, but its accessibility to intelligence is a very recent development.

The emergence of large language models trained on code repositories has made programming languages a native medium for AI reasoning. An AI system can now read, write, transform, and debug code with genuine sophistication.

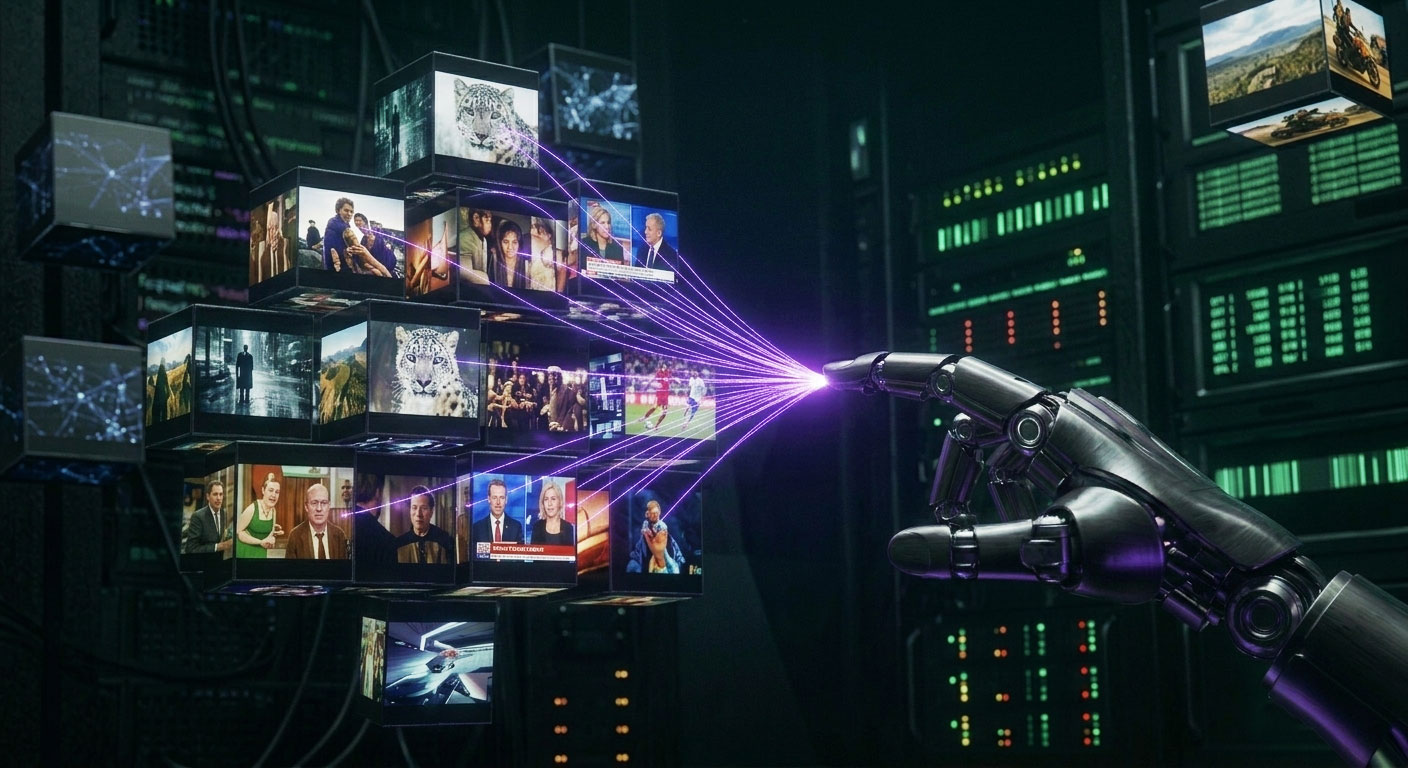

Video has not been through this transition. It is the last major data type that remains architecturally inaccessible to intelligence.

The Scale of the Problem

To appreciate what this means, consider the scale of the data involved.

Video is the largest data type on the internet by volume. Estimates suggest that video accounts for more than 80% of all consumer internet traffic. Enterprise video archives, broadcast libraries, surveillance footage, medical imaging, sports footage, training data, and user-generated content collectively represent more recorded information than all other data types combined.

All of it is locked.

Every sports broadcast. Every news archive. Every training film ever recorded. Every security camera recording. Every interview, every presentation, every documentary.

Every piece of footage in the world right now exists in file formats designed for playback, not composition.

AI systems that need to work with this footage are doing so in the most inefficient way imaginable: they download rendered files, process them in their entirety, generate metadata, and store that metadata separately from the underlying media. The result is redundancy at enormous scale, processing costs that grow with archive size, and semantic understanding that cannot be reused without re-processing.

The moment a sequence needs to change, the entire rendering process begins again.

What Virtualisation Changes

Video Virtualisation does not make video files smaller. It does not make video faster to stream or cheaper to deliver. These are problems that CDNs and codecs have spent decades solving.

Video Virtualisation changes the architectural relationship between a video’s structure and its content.

The sheet music analogy is the clearest way to explain it. Sheet music contains no sound. It contains instructions. The instructions specify pitch, timing, dynamics, and relationships between notes. Any musician with the right instrument can perform those instructions differently. The same composition can be performed by a jazz quartet, a symphony orchestra, or a solo pianist. The instructions are infinitely composable. The media produced by performing those instructions is a consequence of the performance, not a property of the score.

A Virtual Video File is sheet music for video.

It contains no encoded media. It contains instructions: temporal mappings that specify where segments begin and end in the master source, segment boundary definitions that create addressable units within the footage, and assembly parameters that describe how segments compose into output sequences. The master source stays protected in existing storage, never duplicated, never re-encoded.
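The three kinds of instruction described above can be pictured as a small data structure. The sketch below is hypothetical and is not ION's file format: the class and field names, the seconds-based timing, the `transition` parameter and the `s3://` master URI are all illustrative assumptions. What it shows is that such a file holds only references into the master source and serialises to a small JSON document.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass(frozen=True)
class Segment:
    """An addressable unit (segment_id) plus its temporal mapping into the
    master: the half-open range [start_s, end_s), in seconds."""
    segment_id: str
    start_s: float
    end_s: float

    def __post_init__(self):
        if not 0 <= self.start_s < self.end_s:
            raise ValueError(f"invalid boundaries for segment {self.segment_id!r}")

@dataclass(frozen=True)
class AssemblyStep:
    """Assembly parameters: which segment to place next, and how."""
    segment_id: str
    transition: str = "cut"  # hypothetical parameter

@dataclass
class VirtualVideoFile:
    master_uri: str  # reference to the untouched master; no media is embedded
    segments: list[Segment]
    assembly: list[AssemblyStep] = field(default_factory=list)

    def duration_s(self) -> float:
        """Length of the assembled output, computed from the mappings alone."""
        by_id = {s.segment_id: s for s in self.segments}
        return sum(by_id[a.segment_id].end_s - by_id[a.segment_id].start_s
                   for a in self.assembly)

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)

vvf = VirtualVideoFile(
    master_uri="s3://archive/final-match.mxf",  # hypothetical master location
    segments=[Segment("goal", 3605.0, 3625.0), Segment("celebration", 3625.0, 3660.0)],
    assembly=[AssemblyStep("goal"), AssemblyStep("celebration", transition="dissolve")],
)
print(vvf.duration_s())  # 55.0
```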

Two things become possible that were not possible before.

The first is persistent, reusable semantic understanding. The objects, scenes, speech, and moments within footage become queryable, addressable data, not as a separate index bolted onto the outside of a file, but as a persistent layer that lives independently of the underlying media. This semantic understanding can be queried, reused, and acted upon repeatedly without re-processing the source.
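A persistent semantic layer can be sketched as an index of labelled time ranges that refers to the master source but lives apart from it. In the hypothetical sketch below, the annotations are assumed to have been produced once by some analysis pipeline (not shown). Every later query reads only the index, so no footage is decoded again. The labels, timings and class names are illustrative.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Annotation:
    label: str      # e.g. an object, scene, speaker or spoken phrase
    start_s: float  # position in the master source, in seconds
    end_s: float

class SemanticLayer:
    """Hypothetical persistent index over one master source.

    Queries operate on the stored annotations only; the media is never read.
    """

    def __init__(self, master_uri: str, annotations: list[Annotation]):
        self.master_uri = master_uri
        self._annotations = sorted(annotations, key=lambda a: a.start_s)

    def find(self, label: str) -> list[tuple[float, float]]:
        """All time ranges carrying `label`, in master-source order."""
        return [(a.start_s, a.end_s) for a in self._annotations if a.label == label]

    def labels_at(self, t: float) -> set[str]:
        """Everything known to be present at time `t`."""
        return {a.label for a in self._annotations if a.start_s <= t < a.end_s}

layer = SemanticLayer("s3://archive/final-match.mxf", [  # hypothetical master
    Annotation("goal", 3605.0, 3625.0),
    Annotation("crowd", 3600.0, 3660.0),
    Annotation("goal", 5120.0, 5138.0),
])
print(layer.find("goal"))               # [(3605.0, 3625.0), (5120.0, 5138.0)]
print(sorted(layer.labels_at(3610.0)))  # ['crowd', 'goal']
```

The ranges returned by `find` are already in the form a composition step needs, which is what lets the same analysis be reused for many outputs.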

The second is composition as a data operation. New sequences are assembled in near real time from the master source. A prompt instructs the system which segments to assemble, in what order, and with what parameters. The output is a Virtual Video File, not a new rendered file. A 52MB rendered source is represented by a 49KB instruction set, roughly a thousandth of its size. The media is untouched. The structure is infinitely composable.
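Composition as a data operation can be illustrated with a function that turns a selection of segments into a new ordered list of references. This is a hypothetical sketch: it assumes the prompt has already been resolved into segment identifiers (how that happens is not described here), and `Clip`, `compose` and the catalogue contents are invented for illustration. Two different outputs are built from the same catalogue, and neither copies nor re-encodes any media.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Clip:
    """A reference to a time range [start_s, end_s) inside a master source."""
    master_uri: str
    start_s: float
    end_s: float

def compose(catalogue: dict[str, Clip], order: list[str]) -> list[Clip]:
    """Assemble an output sequence as an ordered list of references.

    No media is read or written; each entry still points into its master.
    """
    missing = [sid for sid in order if sid not in catalogue]
    if missing:
        raise KeyError(f"unknown segment ids: {missing}")
    return [catalogue[sid] for sid in order]

def total_duration(sequence: list[Clip]) -> float:
    return sum(c.end_s - c.start_s for c in sequence)

MASTER = "s3://archive/final-match.mxf"  # hypothetical master location
catalogue = {
    "goal_1": Clip(MASTER, 3605.0, 3625.0),
    "goal_2": Clip(MASTER, 5120.0, 5138.0),
    "interview": Clip(MASTER, 7000.0, 7030.0),
}

highlights = compose(catalogue, ["goal_2", "goal_1"])
recap = compose(catalogue, ["interview", "goal_1", "goal_2"])
print(total_duration(highlights), total_duration(recap))  # 38.0 68.0
```

Because both sequences hold the same `Clip` objects, reordering is a list operation rather than a render. Producing pixels would still need a player or service that resolves each reference against the master, which this sketch does not include.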

ION calls the result of this kind of AI-driven assembly Cognitive Video: dynamically composed video built from existing footage and delivered in near real time without re-rendering.

What Becomes Possible

The question to ask about any infrastructure layer is not what it does, but what becomes possible when it exists.

When relational databases were introduced, nobody anticipated Wikipedia, Salesforce, or Airbnb. Those applications required a combination of the database layer, the internet layer, and the application layer. The database did not build them. It created the conditions for their construction.

Video Virtualisation is that kind of infrastructure event for the video medium.

AI agents that can compose personalised video sequences from existing footage, in real time, for individual viewers at scale require this infrastructure layer.

AI systems that can retrieve frame-accurate segments from archives of millions of hours of footage, compose them with synthetically generated content, and produce outputs that blend real and generated video seamlessly require this infrastructure layer.

Broadcast organisations that want to assemble highlight packages, news packages, and personalised feeds dynamically from a single master archive, without generating a single derivative file, require this infrastructure layer.

These are not incremental improvements on existing workflows. They are new categories of capability that cannot exist without the underlying infrastructure.

Video has been the last dark data type for 40 years. The architecture that unlocks it exists. The patents that protect it, filed from 2008 onwards, have been granted globally. The demonstration has been made.

The question now is what gets built on it.